Some of my research involves showing that existing measures are wrong or that published findings are incorrect.

Critical scrutiny is vital for science.

One fallacy is measuring the wrong thing because it seems easier. To measure how protest changes over time or varies over space, the usual method is to count the number of events, because the number of participants is harder to ascertain. ‘Size Matters: Quantifying Protest by Counting Participants’ (Sociological Methods and Research, 2018) challenged this conventional practice. Theoretically we are interested in actions, not events. The correlation between these two measures is surprisingly low, and so the number of events cannot be used as a proxy for the number of protesters. A further advantage of using the total number of participants is that it is robust against the undercounting of events, because the events that go uncounted are typically small and so contribute little to the total number of participants.
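The robustness argument can be illustrated with a small simulation. This is only a sketch on synthetic data, not the analysis from the article: the heavy-tailed size distribution, the cutoff of 100 participants, and the function name `simulate_undercount` are all assumptions made for illustration.

```python
import random


def simulate_undercount(n_events=10_000, threshold=100, seed=0):
    """Return the share of events and the share of participants that
    survive when every event below `threshold` goes unrecorded."""
    rng = random.Random(seed)
    # Hypothetical heavy-tailed sizes: most events are small, a few are very large.
    sizes = [int(rng.paretovariate(1.2) * 10) for _ in range(n_events)]
    # Assumption: the source misses every event smaller than `threshold`.
    recorded = [s for s in sizes if s >= threshold]
    share_events = len(recorded) / len(sizes)
    share_participants = sum(recorded) / sum(sizes)
    return share_events, share_participants


share_events, share_participants = simulate_undercount()
print(f"events recorded:       {share_events:.1%}")
print(f"participants recorded: {share_participants:.1%}")
```

Because the missed events are by construction the smallest ones, the recorded share of participants always exceeds the recorded share of events. How large the gap is depends entirely on the assumed size distribution.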

Another fallacy is using survey data without considering the underlying questions and how respondents construed them. ‘Has Protest Increased Since the 1970s? How a Survey Question Can Construct a Spurious Trend’ (British Journal of Sociology, 2015) showed that the standard battery of questions (used for example in the World Values Survey) did not adequately capture participation in strikes. The apparent upward trend was purely an artifact of measurement, because the battery did not take into account the decline of strikes since the 1970s.

In this example, survey questions were misused by social scientists. It is worse when the question is misunderstood by respondents. The 2021 Census of England and Wales was the first to count the transgender population. ‘Gender Identity in the 2021 Census of England and Wales: How a Flawed Question Created Spurious Data’ (Sociology, 2024) demonstrated that the question had confused many respondents who spoke English poorly. The argument was reinforced by ‘Comparing Transgender Identities in the Census of Scotland and the Census of England and Wales’ (British Journal of Sociology, 2026). My research forced the Office for National Statistics to withdraw these figures from the official statistics. Read the full story here.

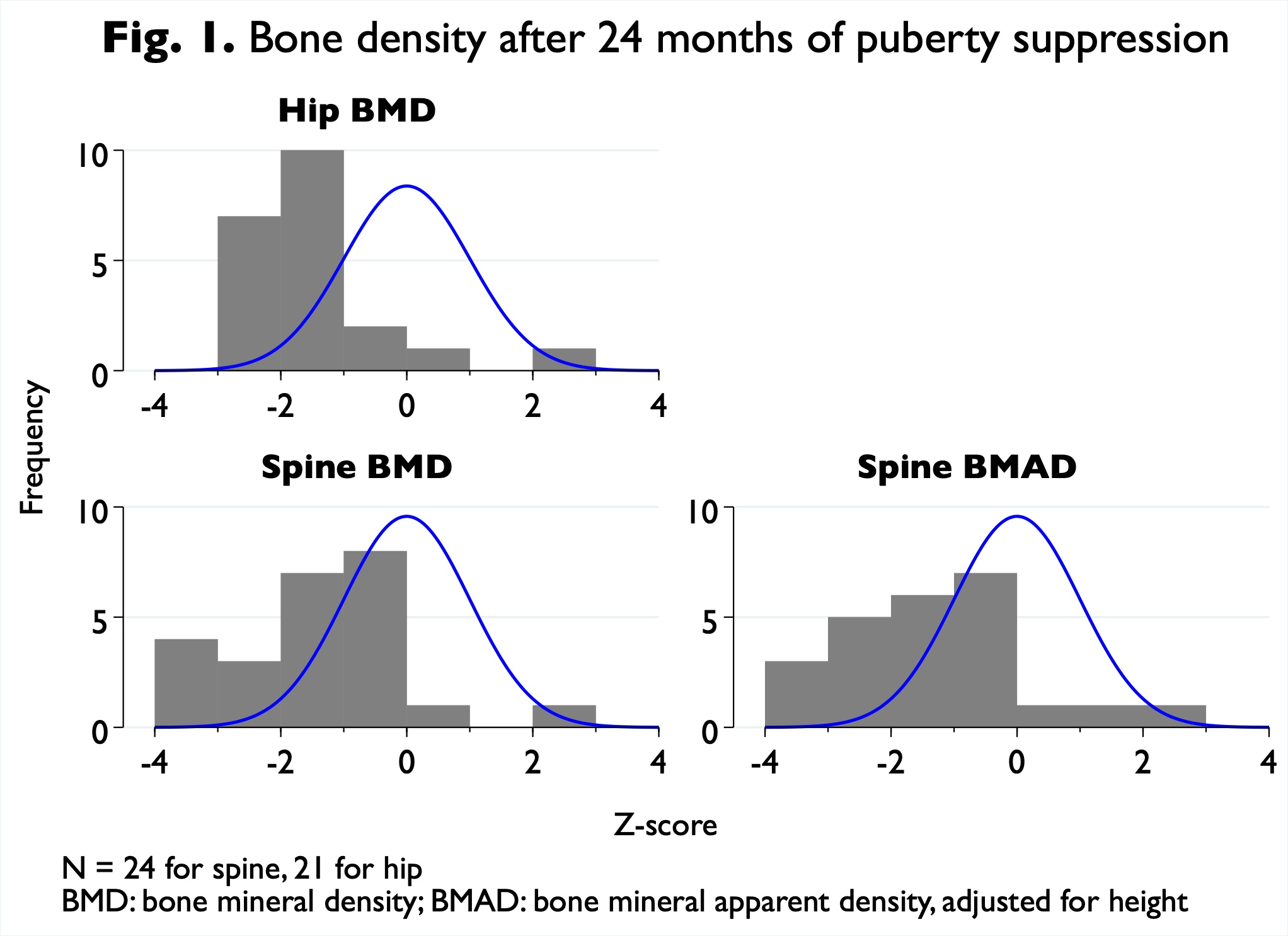

Investigating the evidence for puberty suppression as an intervention for children who identify as transgender, I discovered that the medical literature was riddled with errors and, worse, that clinicians often refused to publish inconvenient evidence. The Tavistock and Portman NHS Foundation Trust’s Gender Identity Development Service launched a study to replicate findings from the Netherlands. Its results had been available since 2016, but I was the first to publish data showing that the replication had failed: ‘Gender Dysphoria and Psychological Functioning in Adolescents Treated with GnRHa: Comparing Dutch and English Prospective Studies’ (Archives of Sexual Behavior, 2020). Articles frequently misrepresent their findings. To take one example, an article by the Tavistock’s researchers implied that patients undergoing puberty suppression had better psychological functioning than patients having only counselling. My letter (Journal of Sexual Medicine, 2019) pointed out that the difference was not statistically significant. As another example, the deleterious effect of puberty suppression on bone mineral density was downplayed by the Tavistock’s clinicians. ‘Revisiting the Effect of GnRH Analogue Treatment on Bone Mineral Density in Young Adolescents with Gender Dysphoria’ (Journal of Pediatric Endocrinology and Metabolism, 2021) demonstrates that up to one in three patients ended up with bone density so low, compared to the norm for their sex and age, as to put them at risk of osteoporosis. My further critiques of this literature can be read here.

A more typical fallacy in social science is multivariate analysis that omits crucial variables. An article in the flagship journal of political science claimed that Civil Rights protest in the early 1960s led to such enduring changes in public opinion as to be discernible half a century later. ‘Did Local Civil Rights Protest Liberalize Whites’ Racial Attitudes?’ (with Christopher Barrie and Kenneth T. Andrews, Research and Politics, 2020) refutes this claim, which depended on the author’s failure to measure college students in 1960 (which predict protest) and college education in the 2010s (which predicts liberal attitudes).
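The logic of omitted-variable bias can be shown with a toy simulation. This is a deliberately simplified sketch, not the analysis in the article: it collapses the two omitted variables into a single synthetic confounder called `college`, sets the true effect of protest on attitudes to zero by assumption, and uses hypothetical helper functions (`slope`, `residuals`, `simulate`).

```python
import random


def slope(x, y):
    """Ordinary least squares slope of y on x (with an intercept)."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def residuals(y, z):
    """Part of y that is not linearly explained by z."""
    b = slope(z, y)
    mz, my = sum(z) / len(z), sum(y) / len(y)
    return [yi - my - b * (zi - mz) for yi, zi in zip(y, z)]


def simulate(n=5000, seed=1):
    rng = random.Random(seed)
    # Assumption: one confounder drives both protest and attitudes.
    college = [rng.gauss(0, 1) for _ in range(n)]
    protest = [c + rng.gauss(0, 1) for c in college]
    attitudes = [c + rng.gauss(0, 1) for c in college]  # no effect of protest
    naive = slope(protest, attitudes)
    # Controlling for the confounder by partialling it out of both variables.
    adjusted = slope(residuals(protest, college), residuals(attitudes, college))
    return naive, adjusted


naive, adjusted = simulate()
print(f"slope omitting the confounder:    {naive:.2f}")
print(f"slope controlling for confounder: {adjusted:.2f}")
```

When the confounder is omitted, protest appears to shift attitudes even though the simulated effect is zero. Once the confounder is controlled, the estimated slope falls to roughly zero.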

My research follows the principles of open science. Kenneth T. Andrews and I deposited our dataset on the 1960 protest sit-ins with the Inter-university Consortium for Political and Social Research, and it has been used in doctoral theses and articles. ‘Has Protest Increased Since the 1970s?’ was the first article published in the British Journal of Sociology to provide data in an online supplement. All my recent publications provide data and Stata code either on the journal’s website or on the Harvard Dataverse, enabling anyone to replicate the results. ‘How reproducible are findings on social movements and collective protest?’ (paper to European Consortium for Social Research, 2024) offers some reflections.